DLP Threat Modeling for the Age of AI

AI is changing how data leaks. We built a framework that helps security teams map risks, test controls, and protect data across AI systems.

Over the last year, the way data moves inside organizations has fundamentally changed.

Generative AI use is growing, and AI is increasingly embedded directly in everyday workflows. Tools like Microsoft Copilot, ChatGPT, Gemini, and countless AI-powered agents are integrated into browsers, SaaS apps, developer environments, and internal systems, in many cases enabled by default.

As this adoption rises, so does the threat of data loss.

For years, data loss prevention (DLP) was built around a relatively simple model: data is stored, transferred, and occasionally shared. In practice, that meant monitoring files, scanning for patterns, and enforcing policies at known control points such as email, uploads, and endpoints. That model, which had many flaws to begin with, no longer holds.

With AI, we keep seeing the same patterns:

- Employees paste internal documents into AI chats to summarize or rewrite them

- Teams adopt AI tools outside approved channels without oversight

- Agents interact with internal systems and external APIs in ways that are hard to trace

- Data flows through browser extensions, plugins, and embedded AI features

Data is constantly interpreted, transformed, and recombined, creating a new kind of risk surface that traditional DLP methods were not built to handle.

As we spoke with security teams, a pattern emerged. They lacked controls and visibility but, more importantly, they often had no clear way to even describe the problem:

What are the actual risks introduced by AI? Where do they occur? How do they differ from traditional data loss scenarios? And, most importantly, how do you prioritize what to fix?

There was no shared map of this new landscape or a structured way to reason about how data leaks in AI-driven environments. So we built one.

A Modern Data Loss Threat Modeling Framework

The goal was simple: create a structured way to map how data actually leaks in AI-driven environments, and make it usable for security teams. The full framework is available at dlptest.io/ai-threat-model.

At a high level, the framework breaks the problem down into three parts:

- Types of risks (what can go wrong)

- Concrete scenarios (how it happens in practice)

- Surfaces (where it happens)

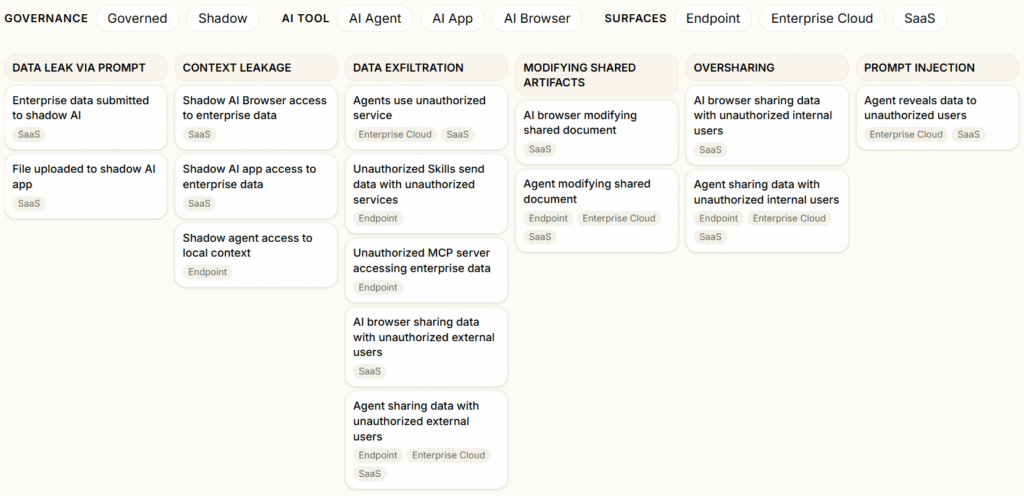

Risks

We identified a set of core data loss risks that are specific to AI:

- Data leak via prompt

- Context leakage

- Data exfiltration

- Modifying shared artifacts

- Oversharing

- Prompt injection

These represent the different ways sensitive data can be exposed when interacting with AI systems.

Scenarios and Surfaces

Each risk (for example, data leak via prompt or data exfiltration) is broken down into multiple concrete scenarios, each tied to where it occurs (SaaS, endpoints, or enterprise cloud) and to the AI interface involved (AI agents, apps, or browsers).

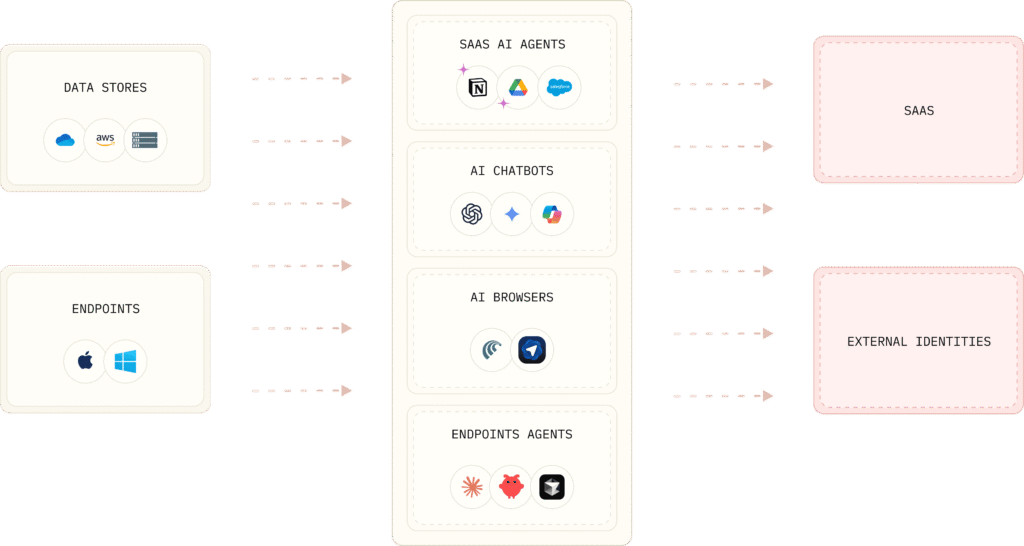

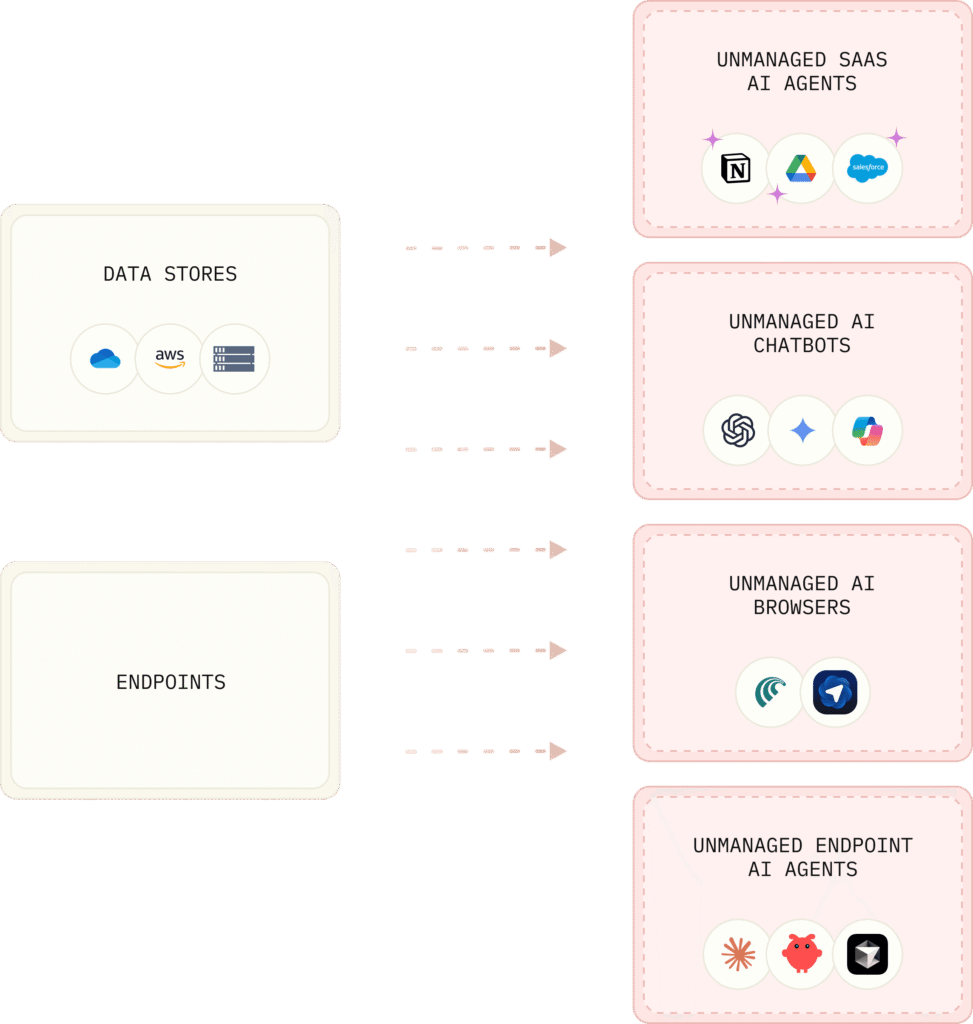
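To make the risk, scenario, and surface structure concrete, here is a minimal Python sketch of how a team might record it. This is an illustration under our own assumptions, not the framework's published schema: the class names, the `scenarios_for` helper, and the three catalog entries are hypothetical, while the risk, environment, and interface values come from the lists above.

```python
from dataclasses import dataclass
from enum import Enum


class Risk(Enum):
    """The six AI-specific data loss risks listed above."""
    DATA_LEAK_VIA_PROMPT = "Data leak via prompt"
    CONTEXT_LEAKAGE = "Context leakage"
    DATA_EXFILTRATION = "Data exfiltration"
    MODIFYING_SHARED_ARTIFACTS = "Modifying shared artifacts"
    OVERSHARING = "Oversharing"
    PROMPT_INJECTION = "Prompt injection"


class Environment(Enum):
    """Where a scenario occurs."""
    SAAS = "SaaS"
    ENDPOINT = "Endpoint"
    ENTERPRISE_CLOUD = "Enterprise cloud"


class Interface(Enum):
    """Which AI interface is involved."""
    AGENT = "AI agent"
    APP = "AI app"
    BROWSER = "AI browser"


@dataclass(frozen=True)
class Scenario:
    """One concrete way a risk plays out on a specific surface."""
    risk: Risk
    description: str
    environment: Environment
    interface: Interface


def scenarios_for(risk: Risk, catalog: list[Scenario]) -> list[Scenario]:
    """Return every scenario in the catalog that realizes the given risk."""
    return [s for s in catalog if s.risk is risk]


# Hypothetical entries, loosely based on the behaviors described earlier.
CATALOG = [
    Scenario(Risk.DATA_LEAK_VIA_PROMPT,
             "Employee pastes an internal document into an AI chat to summarize it",
             Environment.ENDPOINT, Interface.APP),
    Scenario(Risk.DATA_EXFILTRATION,
             "Agent sends internal records to an external API",
             Environment.SAAS, Interface.AGENT),
    Scenario(Risk.DATA_EXFILTRATION,
             "Browser extension forwards page content to a third-party AI service",
             Environment.ENDPOINT, Interface.BROWSER),
]

for s in scenarios_for(Risk.DATA_EXFILTRATION, CATALOG):
    print(s.environment.value, "/", s.interface.value, ":", s.description)
```

Keeping environment and interface as separate fields lets you slice the same catalog by risk, by surface, or by both when mapping your own exposure.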

From Data Flow to Control

Understanding the risks is only one part of the problem. The next step is understanding how data actually flows and where control needs to be applied.

At a basic level, enterprise data starts in two places:

- Data stores (Google Drive, SharePoint, S3, etc.)

- Endpoints (laptops, browsers, local apps)

From there, it flows into different AI surfaces:

- SaaS AI agents

- AI chatbots

- AI browsers

- Endpoint-based agents

In many environments today, these flows are completely unmediated. Data moves directly from enterprise systems into AI tools – both managed and unmanaged.

At this stage, every interaction is a potential leak to external SaaS applications or external identities. There are no inspection points, no enforcement, and no clear understanding of what is leaving the organization.
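The unmediated starting point can be sketched as a simple graph of flows from the two data origins to the four AI surfaces listed above. This is a minimal Python illustration that assumes, for simplicity, that every origin can reach every surface; `FLOWS`, `unmediated`, and the shape of the `controls` mapping are hypothetical names, and a real map would come from discovering which flows actually occur in your environment.

```python
from itertools import product

# Where enterprise data starts, and the AI surfaces it flows into.
SOURCES = ["Data stores", "Endpoints"]
AI_SURFACES = ["SaaS AI agents", "AI chatbots", "AI browsers", "Endpoint-based agents"]

# Simplifying assumption: every source can reach every AI surface.
FLOWS = list(product(SOURCES, AI_SURFACES))


def unmediated(flows, controls):
    """Return the flows that have no inspection or enforcement point.

    `controls` maps a (source, surface) pair to the set of controls applied to it;
    a flow missing from the mapping, or mapped to an empty set, is unmediated.
    """
    return [flow for flow in flows if not controls.get(flow)]


# The baseline described above: nothing sits between enterprise data and AI tools.
baseline = unmediated(FLOWS, controls={})
print(f"{len(baseline)} of {len(FLOWS)} flows are unmediated")  # 8 of 8 flows are unmediated
```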

Adding Controls

To cover this entire flow, controls need to be applied at multiple points.

At the SaaS layer, SaaS DLP governs how data moves between enterprise data stores and cloud applications.

At the endpoint layer, Endpoint DLP governs how data is accessed and used on user devices.

These controls establish visibility and enforcement across traditional data paths.

Controlling AI Interactions

AI introduces a new interaction layer that sits between data and external systems.

To fully cover this layer, additional controls are required:

- Inspecting prompts and responses

- Monitoring agent behavior

- Controlling plugin and API access

An LLM firewall applies these controls, governing how data flows through AI systems themselves rather than just through the systems around them.
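As a minimal illustration of inspecting prompts and responses, the Python sketch below scans text before it crosses the boundary to an AI system and blocks it if it finds sensitive data. It deliberately uses the same kind of pattern scanning traditional DLP relies on: a regular expression plus a Luhn checksum for payment card numbers, and a commonly used pattern for AWS access key IDs. The `inspect_text` function and `Verdict` type are hypothetical, and a production LLM firewall would need far richer detection than a couple of patterns.

```python
import re
from dataclasses import dataclass, field

# Illustrative detectors only; real prompt inspection needs much richer classification.
CARD_RE = re.compile(r"\b(?:\d[ -]?){13,19}\b")    # 13-19 digits, optional separators
AWS_KEY_RE = re.compile(r"\bAKIA[0-9A-Z]{16}\b")   # common AWS access key ID pattern


def luhn_valid(candidate: str) -> bool:
    """Return True if the digits in `candidate` pass the Luhn checksum."""
    digits = [int(ch) for ch in candidate if ch.isdigit()]
    total = 0
    # Double every second digit counting from the right; subtract 9 if it exceeds 9.
    for i, d in enumerate(reversed(digits)):
        if i % 2 == 1:
            d *= 2
            if d > 9:
                d -= 9
        total += d
    return total % 10 == 0


@dataclass
class Verdict:
    allowed: bool
    findings: list[str] = field(default_factory=list)


def inspect_text(text: str) -> Verdict:
    """Inspect a prompt or model response and decide whether it may pass."""
    findings = []
    for match in CARD_RE.finditer(text):
        if luhn_valid(match.group()):   # filters out most random digit runs
            findings.append("payment card number")
    if AWS_KEY_RE.search(text):
        findings.append("AWS access key ID")
    return Verdict(allowed=not findings, findings=findings)


prompt = "Summarize this ticket: customer card 4111 1111 1111 1111 was declined"
print(inspect_text(prompt))  # Verdict(allowed=False, findings=['payment card number'])
```

The same call can be applied to model responses on their way back to the user, which is what distinguishes this layer from controls that only watch files and uploads.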

Combined, SaaS DLP, Endpoint DLP, and the LLM firewall cover:

- Data access at the source

- Data usage on endpoints

- Data movement across SaaS

- Data interactions within AI systems

This creates full coverage across the AI data flow.
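The coverage argument can be checked mechanically. In the minimal Python sketch below, the four required layers are taken from the list above, while the mapping from each control to the layers it covers is our own assumption for illustration (in particular, treating SaaS DLP as covering data access at the source); `coverage_gaps` is a hypothetical helper.

```python
# The four layers listed above that must be covered.
REQUIRED_LAYERS = {
    "data access at the source",
    "data usage on endpoints",
    "data movement across SaaS",
    "data interactions within AI systems",
}

# Assumed mapping of each control to the layers it covers (illustrative only).
CONTROL_COVERAGE = {
    "SaaS DLP": {"data access at the source", "data movement across SaaS"},
    "Endpoint DLP": {"data usage on endpoints"},
    "LLM firewall": {"data interactions within AI systems"},
}


def coverage_gaps(deployed: set[str]) -> set[str]:
    """Return the required layers that no deployed control covers."""
    covered = set().union(*(CONTROL_COVERAGE[c] for c in deployed))
    return REQUIRED_LAYERS - covered


# Traditional DLP alone leaves the AI interaction layer uncovered.
print(coverage_gaps({"SaaS DLP", "Endpoint DLP"}))  # {'data interactions within AI systems'}
print(coverage_gaps(set(CONTROL_COVERAGE)))         # set()
```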

How to Use This Framework

The framework is meant to be practical. It can be used to:

- Map your exposure – Identify which AI tools, agents, and surfaces interact with your data

- Identify gaps – Understand which scenarios you cannot detect or control today

- Prioritize controls – Focus on the risks that matter most in your environment

- Evaluate vendors – Compare solutions based on real scenarios, not generic capabilities
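The first three steps can be combined into a simple prioritized gap list. This Python sketch assumes you have already assessed each scenario, and it ranks undetected, in-use scenarios by likelihood multiplied by impact, a common threat-modeling heuristic rather than something the framework prescribes. The `Assessment` fields, the 1 to 5 scales, and the example entries are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Assessment:
    scenario: str
    in_use: bool       # Map your exposure: does this surface touch our data?
    detectable: bool   # Identify gaps: can current controls detect or block it?
    likelihood: int    # assumed 1-5 scale
    impact: int        # assumed 1-5 scale


def prioritized_gaps(assessments: list[Assessment]) -> list[Assessment]:
    """Keep in-use scenarios we cannot detect, highest likelihood x impact first."""
    gaps = [a for a in assessments if a.in_use and not a.detectable]
    return sorted(gaps, key=lambda a: a.likelihood * a.impact, reverse=True)


# Hypothetical assessments for illustration.
ASSESSMENTS = [
    Assessment("Internal document pasted into an AI chat", True, False, 5, 3),
    Assessment("Agent sends customer records to an external API", True, False, 3, 4),
    Assessment("Browser extension reads internal pages", True, True, 4, 4),
    Assessment("Endpoint agent modifies a shared artifact", False, False, 2, 4),
]

for gap in prioritized_gaps(ASSESSMENTS):
    print(gap.likelihood * gap.impact, gap.scenario)
```

The same assessments can support vendor evaluation: rerun the list with `detectable` set to what each candidate solution actually covers, and compare what remains.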

Ultimately, it aims to shift the conversation from “Do we have DLP?” to “Which AI-driven data loss scenarios can we actually prevent?”

As you go through the framework, a few key questions emerge:

- Do we know which AI tools access enterprise data?

- Can we inspect AI prompts and responses?

- Can we detect AI agents accessing internal systems?

- Do we control where AI plugins send data?

- Can we monitor AI-driven API calls?

If these questions are difficult to answer, there are likely gaps in visibility and control.

Validating the Scenarios

The framework helps you map and reason about AI data loss risks, and dlptest.io also lets you test those scenarios in practice, using modern, relevant AI use cases and a rich library of structured and unstructured test data.

Try It Yourself

We built this framework to make the problem visible and actionable.

Employees are already using AI tools. Agents are already interacting with systems. Data is already flowing through prompts, APIs, and plugins. The question is no longer whether this is happening, but whether you understand it and can control it.

We hope this framework helps security teams map their own environments, walk through the scenarios, and evaluate where controls are missing. Try it at dlptest.io/ai-threat-model.